How AI-powered security is solving yesterday’s problem while tomorrow’s is already here.

When Anthropic announced Claude Code’s security capabilities, the reaction across the industry was immediate and justified. AI that can scan your codebase, identify vulnerabilities, and suggest fixes in real time is a genuine leap forward. For teams that have spent years manually triaging CVEs and chasing injection flaws across sprawling codebases, this feels like the moment the tide finally turns.

And in many ways, it is.

But there’s a question worth sitting with: when AI locks down the code layer, where does the risk go?

The answer is uncomfortable – and mostly ignored.

The Progress Is Real. So Is the Blind Spot.

Let’s give credit where it’s due. Application security has historically been a game of whack-a-mole. Developers ship fast. Vulnerabilities slip through. Security teams scramble to patch, prioritize, and communicate risk to stakeholders who want everything fixed yesterday. Static analysis tools helped, but they were slow, noisy, and required expertise to interpret. Penetration testing was episodic. Bug bounties were reactive.

AI changes the economics of all of this. Continuous, intelligent scanning embedded in the development workflow means vulnerabilities get caught earlier, fixed faster, and with less human overhead. Injection flaws, misconfigurations, dependency risks – the classes of problems that have dominated the AppSec conversation for decades are becoming increasingly addressable through automation.

This is meaningful progress. Fewer CVEs reaching production is unambiguously good.

But security doesn’t operate in a vacuum. When you close one door, attackers don’t give up – they find the next one. And right now, while the industry’s attention is concentrated on making code safer, a different attack surface is quietly expanding.

The Attack Surface Is Shifting – Into Identity

Here’s what’s actually happening beneath the surface of the AI-driven development boom.

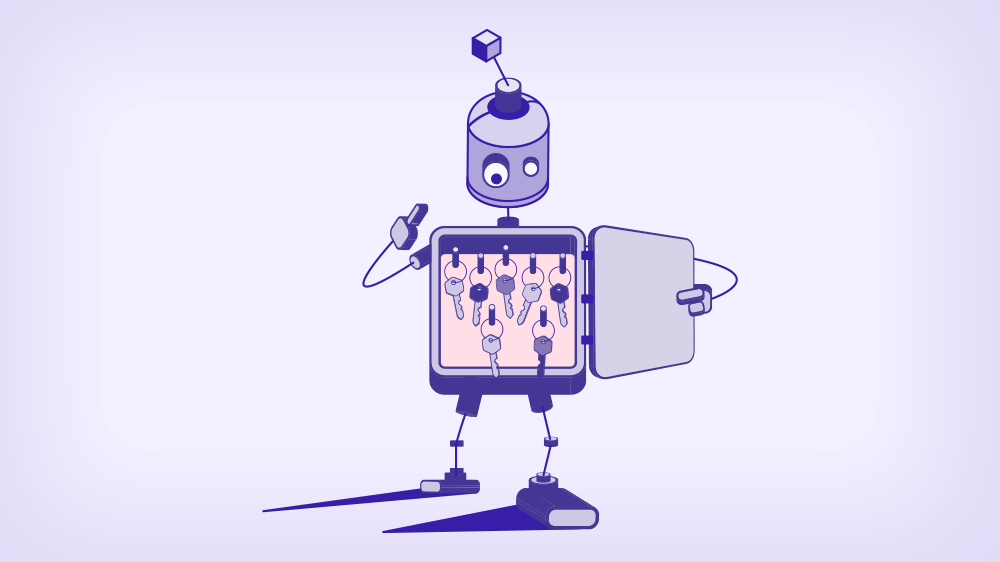

Every AI agent, every automated pipeline, every microservice, every cloud workload needs to authenticate somewhere. It needs to talk to a database, call an API, read from storage, write to a queue. And to do any of that, it needs credentials.

In most enterprises today, those credentials are static secrets – API keys, service account tokens, long-lived passwords – stored in vaults, hardcoded in repos, or passed around in environment variables across teams and systems. This was already a fragile approach before AI entered the picture. Now, as organizations race to deploy AI agents at scale, the problem is compounding faster than most security teams can track.

Consider what AI-native development actually produces at the infrastructure level:

More services. More integrations. More automated pipelines. More workloads spinning up and down at runtime. Each one requiring access. Each one representing a potential identity that needs to be managed, monitored, and governed.

The code is getting cleaner. The identity layer is getting messier.

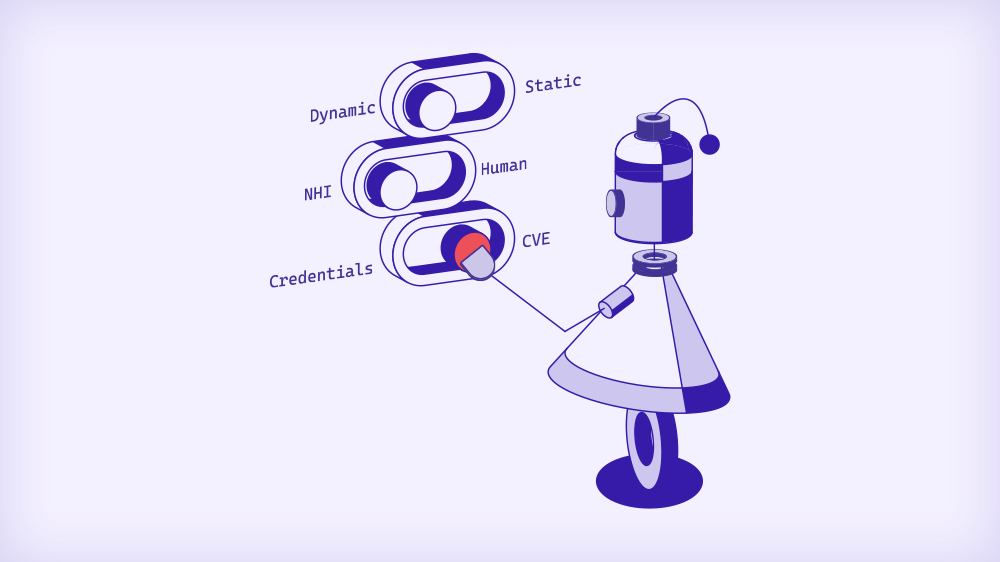

Three Shifts Nobody Is Talking About Loudly Enough

From CVEs to credentials. The vulnerability classes that have dominated security discourse for years – SQL injection, XSS, buffer overflows – are precisely the things AI is best positioned to catch. But the credentials that authenticate the services running that cleaner code? Those are largely outside the scope of AppSec tooling. A perfectly patched application running on an over-privileged service account with a two-year-old API key is still a serious risk. The surface has shifted, not shrunk.

From human to non-human identity. For most of security’s history, identity meant users. You managed employees, contractors, admins. You enforced MFA, monitored login anomalies, revoked access when people left. That paradigm is breaking down. In modern cloud environments, non-human identities – service accounts, machine tokens, OAuth credentials, AI agents – already outnumber human users by orders of magnitude in most organizations. And they’re growing faster. A single new AI agent can mean dozens of new identities. Every new integration is a new credential. The attack surface is predominantly non-human, and most security architectures were built for a world where it wasn’t.

From static to dynamic. Traditional secrets management was built on a simple assumption: store the credential securely, rotate it periodically, and you’ve done your job. That model made sense when environments were relatively stable. It doesn’t make sense in a world of ephemeral workloads, containerized microservices, and AI agents that spin up, complete a task, and disappear within minutes. A static secret issued to a workload that lives for 30 seconds doesn’t need to be valid for 90 days. But in most organizations, it is – because the infrastructure to do anything different doesn’t exist.
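To put a number on that mismatch, here is a minimal sketch of the arithmetic using the two figures above: a 90-day credential and a 30-second workload. The function name `exposure_ratio` is purely illustrative and not taken from any product.

```python
from datetime import timedelta


def exposure_ratio(credential_ttl: timedelta, workload_lifetime: timedelta) -> float:
    """How many times longer the credential stays valid than the workload needs it."""
    # Dividing one timedelta by another yields a plain float.
    return credential_ttl / workload_lifetime


# Figures taken from the paragraph above: 90-day validity, 30-second workload.
ratio = exposure_ratio(timedelta(days=90), timedelta(seconds=30))
print(f"Credential outlives its workload by {ratio:,.0f}x")
# prints "Credential outlives its workload by 259,200x"
```

Every second of validity beyond the workload's lifetime is time in which a leaked credential still works.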

Why the Vault and Scanners Are Not Enough

Secrets managers have been the industry default for non-human identity management for good reason. They’re better than hardcoded credentials. They provide centralized storage, access controls, and rotation capabilities. For a long time, they were enough.

But the enterprise environment has outgrown them in ways that are now impossible to ignore.

Vaults are excellent at storing secrets. They’re not designed to answer the questions that matter most in a dynamic, AI-driven environment:

- Where is this credential actually being used?

- Which workload is consuming it right now?

- Is it scoped to the minimum permissions needed for this specific task?

- Has it been sitting dormant for six months because the service it was created for was deprecated and no one noticed?
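Answering the last two questions requires usage data that a vault on its own typically does not hold. As a rough sketch, assume an inventory that records when each credential was last used and which scopes it actually exercised. The `CredentialRecord` fields, the `audit` function, and the 180-day threshold are all hypothetical, chosen only to illustrate the checks.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import List, Optional


@dataclass
class CredentialRecord:
    """Hypothetical inventory entry; real tools will expose different fields."""
    name: str
    created_at: datetime
    last_used_at: Optional[datetime]   # None if never observed in use
    granted_scopes: frozenset
    used_scopes: frozenset


def audit(record: CredentialRecord, now: datetime,
          dormant_after: timedelta = timedelta(days=180)) -> List[str]:
    """Flag credentials that are dormant or hold scopes they never use."""
    findings = []
    # A credential that was never used counts as idle since creation.
    last_activity = record.last_used_at or record.created_at
    if now - last_activity > dormant_after:
        findings.append("dormant")
    unused = record.granted_scopes - record.used_scopes
    if unused:
        findings.append("over-scoped: " + ", ".join(sorted(unused)))
    return findings


record = CredentialRecord(
    name="legacy-report-service",
    created_at=datetime(2023, 1, 10, tzinfo=timezone.utc),
    last_used_at=datetime(2024, 5, 1, tzinfo=timezone.utc),
    granted_scopes=frozenset({"db:read", "db:write", "queue:publish"}),
    used_scopes=frozenset({"db:read"}),
)
print(audit(record, now=datetime(2025, 1, 1, tzinfo=timezone.utc)))
# prints ['dormant', 'over-scoped: db:write, queue:publish']
```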

Discovery tooling has emerged to fill part of this gap – scanning environments for unmanaged credentials, mapping blast radius, surfacing hardcoded secrets in codebases. These tools are valuable. But they’re diagnostic, not prescriptive. They tell you what the mess looks like. They don’t fix it, and they don’t prevent the next one.

The “secretless” architecture vision attempts to eliminate the problem at the root – replacing credentials entirely with identity-based access that doesn’t require a secret to be stored or transmitted. It’s the right destination. But it requires deep re-architecture, significant investment, and migration timelines that most enterprises – especially those in heavily regulated industries with complex legacy environments – simply can’t absorb all at once.

Which leaves most organizations caught between approaches, piecing together a vault, a discovery tool, and some governance layer, and hoping the combination is sufficient.

It usually isn’t.

What the Agentic Era Actually Demands

The deployment of AI agents isn’t a future scenario. It’s happening now, in production, at Fortune 500 companies across every industry. And the security implications are arriving faster than the frameworks to address them.

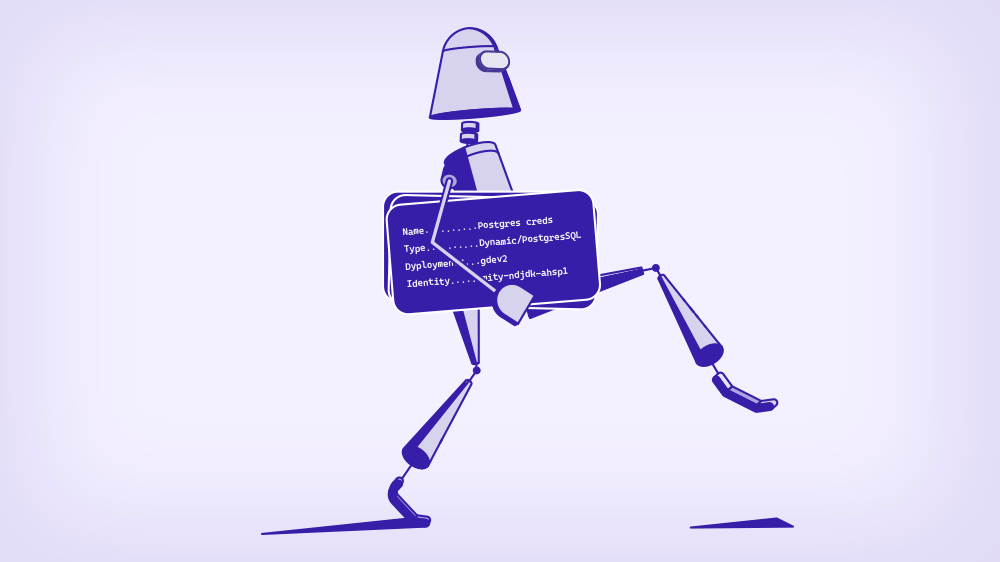

An AI agent is, from an identity and access perspective, a workload like any other – except it tends to require broader access, operate more autonomously, and interact with more systems than a traditional service. It might need to read from a database, call external APIs, write to a content management system, trigger downstream workflows, and authenticate to multiple internal services, all within a single task execution.

If that agent is operating with a long-lived credential scoped with more permissions than it needs – which is the current reality in most organizations – the blast radius of a compromise isn’t just the agent itself. It’s every system the agent’s credentials can reach.
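One way to reason about that blast radius is as reachability in an access graph. The sketch below assumes a simple mapping from each identity or system to the systems its credentials can reach, then walks it breadth-first. The graph contents and names are invented for illustration, and real environments would need far richer permission modeling.

```python
from collections import deque
from typing import Dict, List, Set


def blast_radius(access_graph: Dict[str, List[str]], start: str) -> Set[str]:
    """Return every system reachable from `start` by following access grants."""
    seen = {start}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        for target in access_graph.get(node, ()):
            if target not in seen:
                seen.add(target)
                queue.append(target)
    seen.discard(start)  # the compromised identity itself is not counted
    return seen


# Hypothetical environment: the agent reaches three systems directly,
# and one of those holds credentials for a fourth.
access_graph = {
    "content-agent": ["orders-db", "cms", "workflow-engine"],
    "workflow-engine": ["billing-api"],
}
print(sorted(blast_radius(access_graph, "content-agent")))
# prints ['billing-api', 'cms', 'orders-db', 'workflow-engine']
```

The agent was never granted access to `billing-api` directly, yet it appears in the result because a system the agent can reach holds its own credentials for it.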

This isn’t hypothetical. The attack patterns are already emerging. Credential theft targeting automated pipelines. Privilege escalation through over-permissioned service accounts. Lateral movement through interconnected AI workflows. The techniques aren’t new. The scale and speed at which they can be executed against a fragmented, poorly governed non-human identity landscape are.

What the agentic era demands isn’t a better vault or a smarter scanner. It demands a fundamental rethinking of how access is granted, governed, and revoked for non-human identities at runtime.

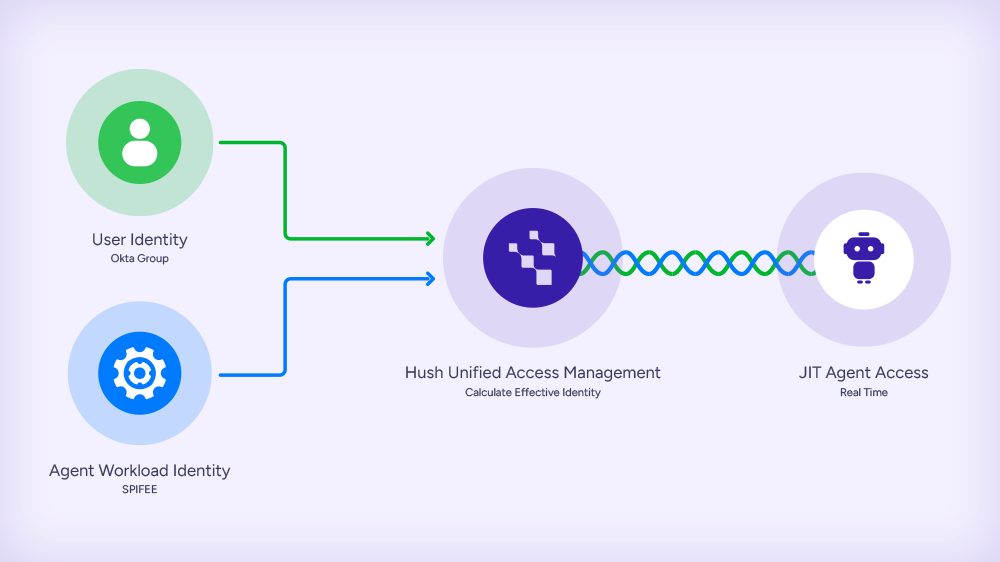

Specifically, it demands just-in-time access – where credentials are issued for the duration of a specific task, scoped to the minimum permissions required, and automatically revoked when the task completes. No long-lived tokens. No standing privileges. No credentials that outlive the workload they were created for.
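As a rough illustration of those three properties (task-scoped, time-bound, revocable), here is a minimal in-memory sketch. `JitBroker`, `Grant`, and the scope strings are hypothetical names, not any vendor's API, and a real system would also need to authenticate the requesting workload, persist state, and log every decision.

```python
import secrets
import time
from dataclasses import dataclass
from typing import Callable, Dict, Iterable


@dataclass(frozen=True)
class Grant:
    token: str
    scopes: frozenset
    expires_at: float


class JitBroker:
    """Toy broker: issues short-lived, narrowly scoped tokens and forgets them on expiry."""

    def __init__(self, clock: Callable[[], float] = time.monotonic):
        self._clock = clock                  # injectable so expiry can be tested
        self._grants: Dict[str, Grant] = {}

    def issue(self, scopes: Iterable[str], ttl_seconds: float) -> Grant:
        grant = Grant(
            token=secrets.token_urlsafe(32),
            scopes=frozenset(scopes),
            expires_at=self._clock() + ttl_seconds,
        )
        self._grants[grant.token] = grant
        return grant

    def is_allowed(self, token: str, scope: str) -> bool:
        grant = self._grants.get(token)
        if grant is None:
            return False                     # unknown or already revoked
        if self._clock() >= grant.expires_at:
            del self._grants[token]          # expired grants are dropped
            return False
        return scope in grant.scopes

    def revoke(self, token: str) -> None:
        self._grants.pop(token, None)


broker = JitBroker()
grant = broker.issue({"orders-db:read"}, ttl_seconds=30)
try:
    print(broker.is_allowed(grant.token, "orders-db:read"))   # True
    print(broker.is_allowed(grant.token, "orders-db:write"))  # False: never granted
finally:
    broker.revoke(grant.token)               # revoke as soon as the task ends
print(broker.is_allowed(grant.token, "orders-db:read"))       # False: revoked
```

The `try`/`finally` ties revocation to the end of the task, so the credential cannot outlive the work it was issued for even if the task fails.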

It demands unified visibility – a single control plane that spans cloud, hybrid, and on-premises environments, giving security teams a real-time view of every non-human identity, what it has access to, and what it’s actually doing.

And it demands a path that works now – not after a multi-year re-architecture project. Organizations need to be able to reduce risk against their existing infrastructure while building toward a least-privilege, identity-based future. These goals aren’t mutually exclusive, but achieving both requires a platform designed with that tension in mind.

The Winners Will Own the Control Plane

The non-human identity market is crowded and consolidating fast. Vault vendors are expanding their feature sets. Discovery tools are adding governance layers. AppSec platforms are moving into runtime. Everyone is racing toward the middle.

The vendors who win this space won’t be the ones with the best scanner or the smartest vault. They’ll be the ones who unify discovery, storage, and governance into a single control plane – replacing static secrets with just-in-time, identity-based access, across cloud, hybrid, and the legacy systems everyone knows exist but no one wants to talk about.

That’s not a minor product improvement. It’s a different architectural thesis. And the enterprises that figure this out early – that start treating non-human identity with the same rigor they’ve applied to application security – will be the ones operating from a position of strength as the agentic era matures.

The Target Hasn’t Disappeared

AI-powered application security is a genuine advancement. The code layer is getting safer. CVEs are getting caught earlier. Injection flaws that have plagued development teams for decades are increasingly solvable through automation.

But the attack surface hasn’t shrunk. It’s shifted – into workload identity and runtime access. Into the credentials authenticating the AI agents, microservices, and automated pipelines that now form the operational backbone of modern enterprises.

Less vulnerable code. More long-lived credentials. Fewer injection flaws. More over-privileged service accounts. Better applications. More unmonitored AI agents with unchecked access.

The security architecture that got us here was built for a world of human users, monolithic applications, and static environments. It’s not the architecture we need for a world of AI agents, ephemeral workloads, and dynamic, multi-cloud infrastructure.

The shift is already happening. The question is whether security teams will recognize where the new perimeter actually is – and build toward it – before the attackers make it impossible to ignore.